The Future of SEO is on the Edge

If you own a site today, you face a problem.

You've spent months, years, or maybe even decades optimising your site. Both for humans, to make sure your site speaks your brand's language, and for SEO crawlers, to make sure your site ranks.

But now there's an additional challenge: making sure that your site is intelligible to the myriad AI crawlers that scrape it every minute of every day (yes, that often).

Optimising a site for AI crawlers can often pull you in a different direction from what you've been doing to optimise for humans and SEO:

- Whereas humans and SEO crawlers are fine with JavaScript, AI crawlers don't render it at all, giving them an entirely different view of your site.

- Whereas SEO rewards rich content, AI crawlers just want short, concise, immediate answers.

So, what do you do? It seems like it's impossible to build a site that's optimal for humans, SEO crawlers, and AI crawlers simultaneously. Or is it?

Cue Edge SEO

I've spoken to SEOs with years of experience in the field who have never heard the phrase Edge SEO, and you can't blame them. It's a niche concept, and it's a little technical, so I'll try to break it down as simply as possible.

In the most basic model of how you access a website, you type that website's domain into your browser bar, which sends a request to the website's server. The server then sends information (HTML, JavaScript, all that good stuff) back to your browser for display, and you get to see the site.

While this is a helpful model, it's not actually how most websites operate.

Most modern websites use something called a CDN (content delivery network), the most popular being Cloudflare, to act as an intermediary between you and the website's server. Cloudflare intercepts your request as soon as it happens, and tries to serve you a cached (i.e. preprocessed, compressed, saved) version of the site, from a server that's close to you. This is great for everyone involved - you get a faster page load, and the site owner has fewer requests hitting their server - wins all around!

CDNs allow for something called edge compute - the ability to write lightning-fast code that runs on the CDN's servers in the milliseconds after they receive a request. Edge compute allows you to do pretty much whatever you want with the request; you could use it to reject certain incoming requests, or to apply custom edits to all the HTML that gets sent back. It gives you complete control over how your site responds to requests, all without having to log into a CMS or actually edit your site.
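To make that a bit more concrete, here's a rough TypeScript sketch of the two things mentioned above: rejecting a request, and editing HTML on its way back. Everything named here (`EdgeRequest`, `handleAtEdge`, the blocked paths, the injected meta tag) is made up for illustration, and the HTML edit is a plain string replace rather than any particular CDN's API.

```typescript
// Minimal stand-ins for a request and a response; real edge runtimes
// provide their own, richer objects.
interface EdgeRequest {
  path: string;
}

interface EdgeResponse {
  status: number;
  body: string;
}

// Hypothetical rule: paths that should never be reachable from outside.
const BLOCKED_PREFIXES = ["/wp-admin", "/internal"];

// Runs between the visitor and the origin server.
// `originHtml` is whatever the origin (or the cache) would have returned.
function handleAtEdge(req: EdgeRequest, originHtml: string): EdgeResponse {
  // 1. Reject certain incoming requests before they reach the origin.
  if (BLOCKED_PREFIXES.some((prefix) => req.path.startsWith(prefix))) {
    return { status: 403, body: "Forbidden" };
  }

  // 2. Edit the HTML that gets sent back, without touching the CMS.
  const edited = originHtml.replace(
    "</head>",
    () => '<meta name="edge-edited" content="true"></head>',
  );
  return { status: 200, body: edited };
}
```

The point is that this one function sits in front of every page on the site, so the rule is written once rather than page by page.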

Back to SEO

So, what's Edge SEO?

Well, it's the practice of using edge compute to modify the site content that gets sent back to specific requests. Some common examples are:

- Internationalisation

Modifying the content that gets sent back to users and crawlers, depending on the specific location that they're in. You might want to serve different schema markup to different countries, or different meta tags to different locations.

- Technical SEO fixes

You might use edge compute to check your HTML for any critical errors before it gets sent to users and crawlers, and insert fixes on the fly.
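Both of these examples boil down to small functions that run on every response. The sketch below assumes the CDN hands you a two-letter country code for each request (most do in some form), and uses a missing canonical tag as the stand-in "critical error". The descriptions, function names, and the regex check are illustrative assumptions, not a complete HTML validator.

```typescript
// Hypothetical per-country meta descriptions.
const META_BY_COUNTRY: Record<string, string> = {
  GB: "Optimise your site for AI search.",
  US: "Optimize your site for AI search.",
};
const DEFAULT_DESCRIPTION = "Make your site readable by AI search.";

// Internationalisation: choose a meta description from the visitor's
// two-letter country code, falling back to a default.
function metaDescriptionFor(country: string | undefined): string {
  const text =
    (country ? META_BY_COUNTRY[country.toUpperCase()] : undefined) ??
    DEFAULT_DESCRIPTION;
  return `<meta name="description" content="${text}">`;
}

// Technical fix: add a canonical link if the page forgot one.
function ensureCanonical(html: string, canonicalUrl: string): string {
  const hasCanonical = /<link[^>]+rel=["']canonical["']/i.test(html);
  if (hasCanonical) return html;
  // A replacer function avoids "$" in the URL being treated specially.
  return html.replace(
    "</head>",
    () => `<link rel="canonical" href="${canonicalUrl}"></head>`,
  );
}
```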

In fact, when you loaded this very page you actually experienced Edge SEO first-hand. I used Cloudflare's edge compute to take requests to trakkr.ai/blog/, and respond with content that actually lives at blog.trakkr.ai. Nothing really lives at the URL you're currently visiting; the content sits on the subdomain, and I've used Edge SEO to account for the fact that sub-path blogs tend to rank better than ones hosted on subdomains.
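The core of that trick is a URL rewrite. Here's a minimal sketch of it, assuming that trakkr.ai/blog/<path> maps to blog.trakkr.ai/<path> (the exact mapping is my guess for illustration, and `toBlogOrigin` is a hypothetical helper name):

```typescript
// Assumed mapping: trakkr.ai/blog/<path> is fetched from blog.trakkr.ai/<path>.
const PUBLIC_PREFIX = "/blog";
const ORIGIN_HOST = "blog.trakkr.ai";

// Returns the URL to fetch content from, or null if the request
// isn't for the blog and should pass through untouched.
function toBlogOrigin(requestUrl: string): string | null {
  const url = new URL(requestUrl);
  const isBlog =
    url.pathname === PUBLIC_PREFIX ||
    url.pathname.startsWith(PUBLIC_PREFIX + "/");
  if (!isBlog) return null;

  url.hostname = ORIGIN_HOST;
  // Drop the "/blog" prefix; an empty remainder becomes "/".
  url.pathname = url.pathname.slice(PUBLIC_PREFIX.length) || "/";
  return url.toString();
}
```

At the edge, you would fetch the returned URL and hand its response back to the visitor, whose address bar never leaves the sub-path.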

These are all great examples of SEO executed at the edge, as they allow a site owner to write one piece of logic on their CDN that covers all requests, rather than having to go and edit or maintain every single page on their site.

And yet, despite the potential for Edge SEO, it's never really caught on. It's certainly more difficult to implement than just modifying a page directly, and there's never been a huge impetus to invest in it, until now.

Now is the time for Edge SEO

We discussed earlier how site owners are being pulled in different directions by trying to optimise for humans, SEO, and AI all at once. Well, this is a problem that Edge SEO is perfectly positioned to solve.

Edge compute allows you to intercept requests and route them differently depending on whether they're from humans and traditional SEO crawlers on the one hand, or AI crawlers on the other.

The former receive your usual page content, straight from your server, whereas AI crawlers can receive a version of your site that's been optimised for AI understanding. This helps them digest and understand your site, which makes them more confident in citing it in AI conversations and ultimately wins you traffic and visibility.
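In its simplest form, that routing decision is a user-agent check. The sketch below uses a short, non-exhaustive list of user-agent tokens that AI vendors publish for their crawlers; the function names and the fallback behaviour are assumptions for illustration.

```typescript
// Illustrative, non-exhaustive list of AI crawler user-agent tokens.
const AI_CRAWLER_TOKENS = [
  "GPTBot",
  "OAI-SearchBot",
  "ChatGPT-User",
  "ClaudeBot",
  "PerplexityBot",
];

type Audience = "ai-crawler" | "default";

// Humans and traditional SEO crawlers both fall under "default".
function classify(userAgent: string | null): Audience {
  if (!userAgent) return "default";
  const ua = userAgent.toLowerCase();
  const isAi = AI_CRAWLER_TOKENS.some((token) =>
    ua.includes(token.toLowerCase()),
  );
  return isAi ? "ai-crawler" : "default";
}

// Picks which version of a page to send back.
function route(
  userAgent: string | null,
  originHtml: string,
  aiHtml: string | undefined,
): string {
  // Fall back to the normal page if no AI-optimised version exists yet.
  if (classify(userAgent) === "ai-crawler" && aiHtml !== undefined) {
    return aiHtml;
  }
  return originHtml;
}
```

User-agent strings can be spoofed, so a production setup would likely pair this check with something stronger, such as the IP ranges vendors publish for their crawlers.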

Trakkr Prism

I've been building a first-of-its-kind system inside of Trakkr to allow site owners to do just this. It's called Prism, and it's a small piece of code that you deploy inside your CDN.

It handles human and SEO crawler requests just as normal, adding on only a few milliseconds of extra latency.

But for AI crawler requests, those that come from the likes of OpenAI's ChatGPT or Anthropic's Claude, it takes a different path entirely. For these crawlers, it serves a cached, compressed, and optimised version of your site's pages.

The crucial differences are:

- Pre-rendering

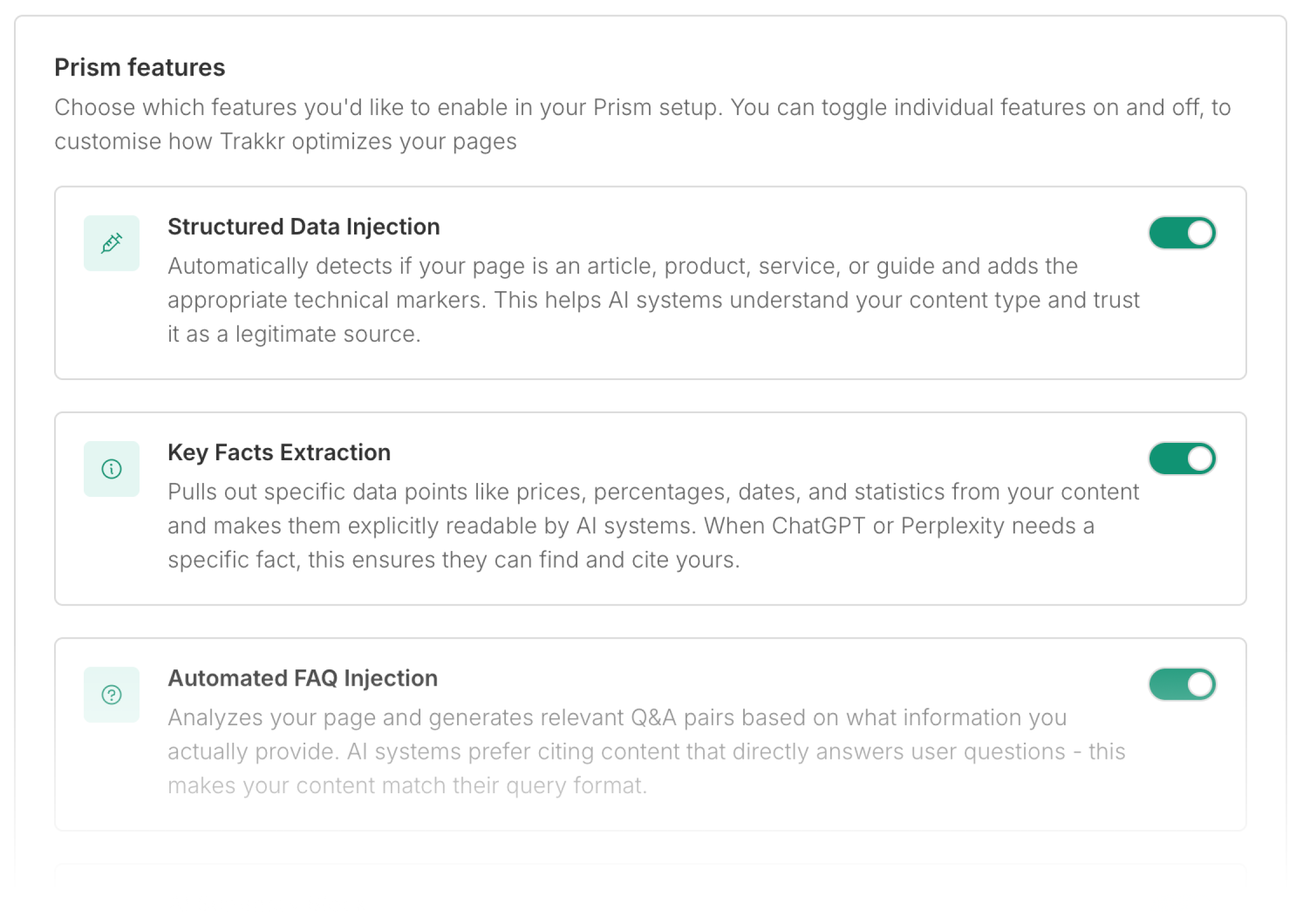

AI crawlers don't execute JavaScript, which means that JavaScript-heavy sites appear completely empty to them. Prism caches a pre-rendered version, essentially letting AI crawlers see the full content that's on your site.

- AI Optimisation

Prism injects dense, concise metadata into your page head. Because this is one of the first things that an AI crawler reads, it gives them an immediate snapshot and summary of the page's content, and improves their understanding.

- Lightning Fast

Prism strips out all unnecessary content that AI crawlers can't render, letting them immediately parse and learn from the content that matters. This speeds up crawl times, with responses typically below a tenth of a second.

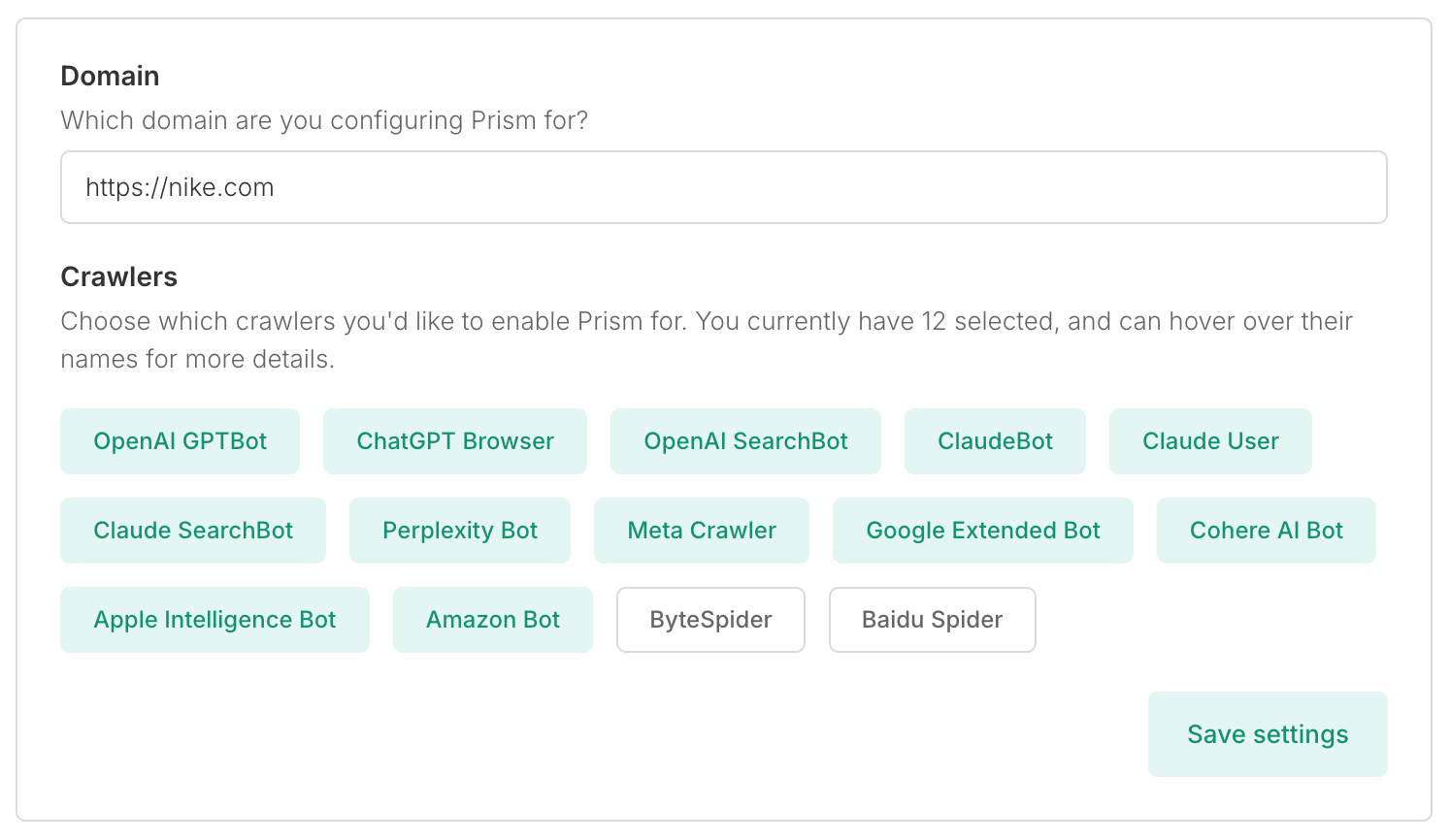
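To give a feel for the last two steps, here's a simplified sketch of what stripping and metadata injection could look like. It is not Prism's actual code: it assumes the pre-rendered HTML already exists (for example, captured by a headless browser), uses a meta description as the stand-in for "dense metadata", and leans on regexes where a real system would use a proper HTML parser. All function names are hypothetical.

```typescript
// Removes <script> blocks, which a non-rendering crawler can't use,
// but keeps JSON-LD structured data, which it can read.
function stripScripts(html: string): string {
  return html.replace(
    /<script\b([^>]*)>[\s\S]*?<\/script>/gi,
    (block: string, attrs: string) =>
      /application\/ld\+json/i.test(attrs) ? block : "",
  );
}

// Escapes text for use inside a double-quoted HTML attribute.
function escapeAttr(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;");
}

// Puts a short summary right at the top of <head>, so it is one of
// the first things a crawler reads.
function injectSummary(html: string, summary: string): string {
  const tag = `<meta name="description" content="${escapeAttr(summary)}">`;
  // (\s[^>]*)? allows attributes on <head> without matching <header>.
  return html.replace(/<head(\s[^>]*)?>/i, (open: string) => open + tag);
}

// Full pipeline for one pre-rendered page.
function optimiseForAiCrawler(prerenderedHtml: string, summary: string): string {
  return injectSummary(stripScripts(prerenderedHtml), summary);
}
```

Because the result is computed once and cached, serving it to a crawler is just a cache read, which is where the fast response times come from.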

What's more, Prism adapts to your needs. Start with defaults that just work, or choose exactly which AI crawlers to optimise for – ChatGPT, Claude, Perplexity, or any combination.

Control every aspect of optimisation – from FAQ generation to schema injection. Each feature toggles independently, making it as simple or powerful as you need.

What's next

Prism has been running in beta for the past two months, serving hundreds of thousands of requests per day. The results have been pretty clear - AI crawlers can finally see what's actually on JavaScript-heavy sites, and those sites are getting cited more often in AI responses.

Prism is launching publicly next week for anyone with a site on Cloudflare. It's a single deployment to your CDN, takes about 10 minutes to set up, and then it runs automatically. Your human visitors and Google see your site exactly as before. AI crawlers get a version they can actually parse.

The reality is that a whole bunch of AI crawlers have probably hit your site in the time you've read this, trying to understand your content. Most of the sites I've seen have more crawler traffic than human traffic, and Cloudflare's own data highlights this. With AI referral traffic growing over 500% year-on-year, the gap between sites that AI can understand and those it can't is only going to grow.

If you're using modern JavaScript frameworks, AI crawlers are probably not seeing much of your site. And if you're prioritising human traffic and SEO crawlers, then AI crawlers are probably struggling to understand your content. That's traffic and visibility you're missing out on, not in some future scenario, but right now.

Edge SEO made sense before, but there was never quite enough reason to invest in it. Now there is. You can keep maintaining three different versions of your site for three different audiences, or you can let Prism handle AI crawlers for you.